I read an article from Doug Criss on CNN yesterday with the title "Hawaii's governor couldn't correct the false missile alert sooner because he forgot his Twitter password." [1] It turns out that Governor Ige knew within two minutes that the alert was a false alarm, but (in the words of the article) "he couldn't hop on Twitter and tell everybody -- because he didn't know his password."

[1] https://edition.cnn.com/2018/01/23/us/hawaii-governor-password-trnd/index.html

There are a couple of different ways to take this story. The most common response I have seen is to blame the employee who accidentally triggered the alarm, and to forgive the Governor his error because who could have guessed that something like this would happen? The second most common response I see is a certain shock that the key mouthpiece of the Governor in this situation is apparently Twitter.

There is some merit to both of these lines of thought. Taking them in turn: it is pretty unfortunate that an employee triggered a state of hysteria by pressing the wrong button (or something to that effect). We always hope that people with great responsibilities (like thermonuclear war) act with extreme caution.

[caption id="attachment_2499" align="aligncenter" width="600"] How about a nice game of global thermonuclear war?[/caption]

So certainly some blame should be placed on the employee.

As for Twitter, I wonder whether some sarcasm was filtered out between the Governor's initial remarks and my reading of them in Doug's article for CNN. It seems likely to me that this comment is meant more as commentary on the status of Twitter as the President's preferred [2] medium of communicating with the People. It certainly seems unlikely to me that the Governor would both frequently use Twitter for important public messages and forget his Twitter credentials. Perhaps this is code for "I couldn't get in touch with the person who manages my Twitter account" (because that person was hiding in a bunker?), but that's not actually important.

[2] Perhaps we should say "only", as the President avoids press conferences. He's only held one press conference so far (http://www.presidency.ucsb.edu/data/newsconferences.php), preferring to keep to Twitter or to relay information through his White House Press Secretaries (first Sean Spicer, then Sarah Huckabee Sanders) and his White House Communications Directors (first Sean Spicer, then Mike Dubke [who resigned during earlier Russia controversy], then Sean Spicer again, then Anthony Scaramucci [who resigned/was forced out after showing gross incompetence as a spokesperson], and now Hope Hicks).

A Parable for the Computing Age: Destroying a Production Database

When I first read about the false alarm in Hawaii and the follow-up stories, I was immediately reminded of a story I'd read on HackerNews [3] and reddit [4] about a junior software developer starting a job at a new company. Bright-eyed and bushy-tailed, the developer begins to set up her [5] development environment and build some familiarity with the database. Not quite knowing better, she uses some credentials from the onboarding document given to her, and ultimately accidentally deletes the entire (actual, production) database.

[3] https://news.ycombinator.com/item?id=14476421

[4] https://np.reddit.com/r/cscareerquestions/comments/6ez8ag/accidentally_destroyed_production_database_on/

[5] Or maybe his? I don't know, but today I'll go with her.

The company immediately panics and blames her. It is her fault that she destroyed the database, and now the company has an enormous loss of data. They don't have backups, they're bringing in legal to assess damage, etc.

What is the moral of this cautionary Parable?

It is certainly NOT that one should blame the young developer.

The moral is that the system should not allow people who do not know any better to access (or delete) the production database, and further that there should be backups so that this sort of catastrophic incident cannot occur. Daily database backups and not including production database access credentials in onboarding documents are two steps in the right direction.
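To make the "system, not person" point concrete, here is a minimal sketch of the kind of guard I have in mind. Everything in it is an assumption for illustration: the names `guard_destructive`, `drop_all_tables`, and `ProductionGuardError` are hypothetical, and the "database" is only a string. It is not how the company in the story worked, nor how any particular database tool behaves.

```python
from typing import Optional


class ProductionGuardError(RuntimeError):
    """Raised when a destructive action targets production without confirmation."""


def guard_destructive(db_name: str, environment: str,
                      confirm: Optional[str] = None) -> None:
    """Hypothetical guard: block destructive actions on production unless confirmed."""
    # Non-production environments are allowed through without ceremony.
    if environment != "production":
        return
    # On production, the caller must retype the database name exactly.
    if confirm != db_name:
        raise ProductionGuardError(
            f"refusing destructive action on production database {db_name!r}: "
            "pass confirm=<database name> to proceed"
        )


def drop_all_tables(db_name: str, environment: str,
                    confirm: Optional[str] = None) -> str:
    """Hypothetical destructive operation, wrapped by the guard above."""
    guard_destructive(db_name, environment, confirm)
    # A real implementation would talk to the database here; this sketch
    # only reports what it would have done.
    return f"dropped all tables in {db_name} ({environment})"
```

With a guard like this, `drop_all_tables("app", "production")` raises an error instead of quietly succeeding, while the same call against a development database goes through. It is no substitute for backups or for keeping production credentials out of onboarding documents, but it does turn a single careless command into a deliberate two-step action.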

In a famous story from IBM, [6] a junior developer makes a mistake that costs the company 10 million dollars. He walks into the office of Tom Watson, the CEO, expecting to get fired. "Fire you?" Mr Watson asks. "I just spent 10 million educating you."

[6] https://www.nytimes.com/2015/12/20/opinion/sunday/the-one-question-you-should-ask-about-every-new-job.html

The system and culture should be crafted to

- prevent these mistakes,

- quickly correct these mistakes, and

- learn from errors to improve the system and culture.

Back to Hawaii

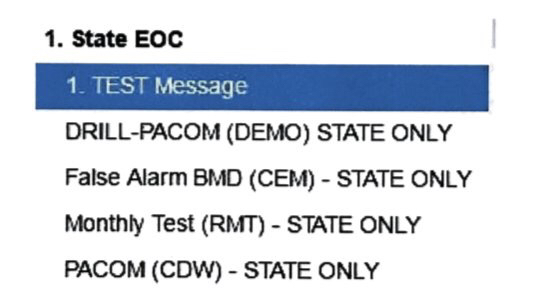

Stories in the news have thus far focused on inadequate prevention, such as the widely circulated image of poor interface design (not to be confused with the earlier, even worse, version, which was apparently made up [7]), or on the inadequate ability to quickly correct these mistakes (such as this CNN article indicating that the Governor's inability to tweet got in the way of quickly restoring peace of mind).

[7] https://arstechnica.com/information-technology/2018/01/the-interface-to-send-out-a-missile-alert-in-hawaii-is-as-expected-quite-bad/

But what I'm interested in is: what will be learned from this mistake, and what changes to the system will be made? And slightly deeper, what led to the previous system?

US Pacific Command and the office of the Governor of Hawaii need to run a complete post-mortem to understand

- what led to this false alarm,

- what led to the nearly forty minutes between understanding there was a false alarm and disseminating this information, and

- what things should be done to address these issues.

Further, this information should be shared widely with the defense and alarm networks throughout the US. Surely Hawaii is not the only state with that (or a similar) setup in place. Can you not imagine this happening in some other state? Other countries might take this as inspiration to reflect on their own disaster-alert systems.

This is a huge opportunity to learn and improve. It may very well be that the poor employee continually makes ridiculous mistakes and should be let go, or it may be that avoiding an error requires too much concentration, in which case the employee can help foolproof the system.

Unfortunately, due to the sensitive nature of this software and scenario, I don't think that we'll get to hear about the most important part — what is learned and changed. But it's still the most important part. It's the important thing to be learned from this Parable for the Nuclear Age.
